The importance of reliable artificial intelligence for strategic government relations

Artificial intelligence is increasingly present in government relations strategies, but not all AI is suitable for processes that demand precision, consistency, and a low risk of error. In political-regulatory environments, where decisions impact compliance, risk, and reputation, the use of AI needs to go beyond generic tools and focus on reliable, well-structured systems aligned with the organization's context.

If you work in government relations, compliance, or advocacy, read on to understand how reliable artificial intelligence can become a strategic differentiator in your day-to-day work.

1. What is reliability in AI?

In government relations, reliability means that the AI system operates correctly and consistently within a specific context, with few failures.

The longer a system can run stably, the more reliable it is.

The acceptable level of reliability can vary greatly depending on the application. For instance, in recreational contexts, such as generating artistic images via AI, an accuracy level of 33% might be sufficient, since out of every three images created, one can be used. But in critical sectors, like civil aviation, even 99% reliability is considered unacceptable, given the impact of a failure.
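To make the gap between these thresholds concrete, the short sketch below estimates how often a system with a given per-task reliability would fail over many tasks. It is a simplification that assumes failures are independent and that reliability is one fixed probability; the function names and task volumes are illustrative only.

```python
def expected_failures(reliability: float, tasks: int) -> float:
    """Average number of failed tasks, assuming independent failures."""
    return (1.0 - reliability) * tasks


def prob_at_least_one_failure(reliability: float, tasks: int) -> float:
    """Chance that at least one of `tasks` independent tasks fails."""
    return 1.0 - reliability ** tasks


if __name__ == "__main__":
    # Illustrative reliability levels: the 33% and 99% figures from the text,
    # plus 99.9% for comparison.
    for reliability in (0.33, 0.99, 0.999):
        print(
            f"reliability={reliability:.3f} "
            f"expected failures per 1,000 tasks="
            f"{expected_failures(reliability, 1000):.0f} "
            f"P(at least one failure in 100 tasks)="
            f"{prob_at_least_one_failure(reliability, 100):.2f}"
        )
```

Under these assumptions, a system that is 99% reliable per task would still be expected to fail about 10 times in every 1,000 tasks, which helps explain why a figure that sounds high can be unacceptable in critical settings.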

In regulatory and political processes, the bar is also high: a single error can distort strategic decisions and increase the company's risk exposure.

Therefore, the AI used in this environment needs to offer high trust and control, not just “magic box” features.

2. AI is not a “magic box”: reliability depends on context

Artificial intelligence works based on probabilities and statistics, not fixed rules. This makes it flexible but also sensitive to context: small changes in data or environments can generate inconsistent responses.

This complexity explains why interactions with tools like ChatGPT can sometimes seem inconsistent. But advanced users know that they need to guide the AI with several questions, refining the context and criteria until a useful answer is reached. This happens because there is a constant trade-off between flexibility and precision.

Thus, when used without clear guidance, open tools like generic assistants can generate noise instead of actionable insights.
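One simple way to observe this sensitivity is to ask the same question several times and measure how often the answers agree. The sketch below is a minimal illustration of that idea, not a complete evaluation method; the answer lists are hypothetical and `agreement_rate` is a name introduced here for the example.

```python
from collections import Counter


def agreement_rate(answers: list[str]) -> float:
    """Share of answers matching the most common answer (1.0 = fully consistent)."""
    if not answers:
        raise ValueError("at least one answer is required")
    # Ignore differences in case and surrounding whitespace.
    normalized = [a.strip().lower() for a in answers]
    most_common_count = Counter(normalized).most_common(1)[0][1]
    return most_common_count / len(normalized)


# Hypothetical labels returned by the same prompt asked five times.
open_prompt = ["relevant", "not relevant", "relevant", "unclear", "relevant"]
guided_prompt = ["relevant", "relevant", "relevant", "relevant", "Relevant "]

print(agreement_rate(open_prompt))    # 3 of 5 answers agree
print(agreement_rate(guided_prompt))  # all 5 agree after normalization
```

A low agreement rate suggests that the prompt or context leaves too much room for interpretation and needs to be narrowed before the output is used in a decision.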

Also read our article “If the spreadsheet dies, we die with it” and discover how relying on spreadsheets and manual processes threatens continuity and compliance in Industry 4.0.

3. Why does high reliability make a difference?

In government relations, reliability is critical because decisions are based on high-impact legislative, political, and regulatory data. An unreliable AI system can distort information, generate false alerts, or ignore strategic initiatives.

Relying on poorly calibrated analyses or inaccurate summaries can compromise advocacy opportunities, compliance, and even the company's reputation. High reliability reduces uncertainty and makes strategies more accurate.

4. Structuring AI for micro-activities

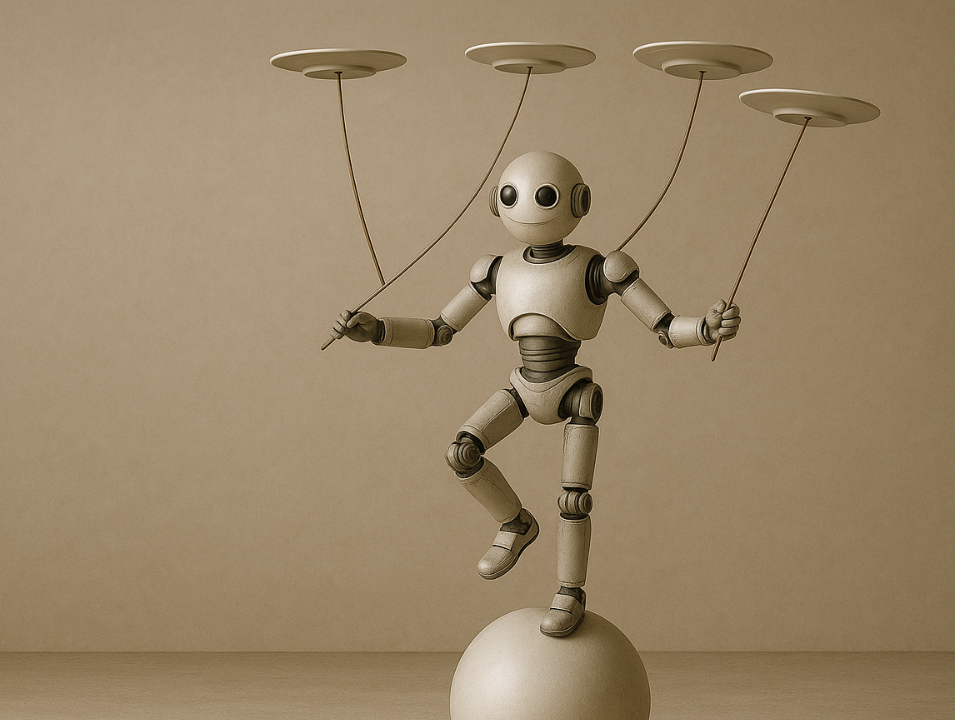

Merely using AI in generic interfaces is not enough. Just as welding robots in a factory perform well when operating within a clearly defined workflow, AI in government relations must be structured for specific micro-activities. This includes tasks such as:

- Identifying strategic themes.

- Filtering relevant legislative propositions.

- Generating standardized summaries.

- Sending precise alerts.

Each micro-activity has its own narrowly defined context, which raises the reliability of the workflow as a whole.
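The micro-activities listed above can be pictured as small, separate steps chained together. The sketch below is only an illustration under strong assumptions: plain keyword matching stands in for the AI component, and the `Proposition` record, the function names, and the sample bills are all hypothetical. It does not describe any particular product's implementation.

```python
from dataclasses import dataclass


@dataclass
class Proposition:
    """Hypothetical, simplified record of a legislative proposition."""
    identifier: str
    title: str
    text: str


def matches_themes(prop: Proposition, themes: set[str]) -> set[str]:
    """Micro-activity 1: find which strategic themes a proposition mentions."""
    content = f"{prop.title} {prop.text}".lower()
    return {theme for theme in themes if theme.lower() in content}


def summarize(prop: Proposition, themes: set[str]) -> str:
    """Micro-activity 2: produce a summary in one fixed, standardized layout."""
    return f"[{prop.identifier}] {prop.title} | themes: {', '.join(sorted(themes))}"


def build_alerts(props: list[Proposition], themes: set[str]) -> list[str]:
    """Chain the steps: filter by theme, then summarize only what passed."""
    alerts = []
    for prop in props:
        matched = matches_themes(prop, themes)
        if matched:  # propositions with no matching theme never reach the summary step
            alerts.append(summarize(prop, matched))
    return alerts


# Hypothetical sample data.
props = [
    Proposition("BILL-001", "Data protection rules for health apps", "Amends privacy obligations."),
    Proposition("BILL-002", "National day of chess", "Creates a commemorative date."),
]
print(build_alerts(props, {"privacy", "data protection"}))
```

Because each step has a single job and a fixed input and output, it can be checked and adjusted on its own, which is the point of structuring the work as micro-activities.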

Here, it is worth getting to know Sigalei's Reporting Agent. It transforms complex data into analyses and charts ready for dispatch, with high precision and automation.

5. How to use reliable AI in practice

Implementing reliable AI in government relations requires four things: defining the scope of key activities, mapping the company's regulatory and political context, establishing clear parameters for relevance and risk, and maintaining a constant human validation flow.
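As a rough sketch of what "clear parameters for relevance and risk" and a human validation flow could look like, the example below routes each item using explicit thresholds. The threshold values, the `TriageRules` class, and the three route names are assumptions made for illustration; each organization would set its own, and a real flow might also send a sample of lower-risk items to reviewers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriageRules:
    """Hypothetical parameters an organization would set for its own context."""
    min_relevance: float = 0.5   # below this, the item is discarded
    review_risk: float = 0.7     # at or above this, a person must validate


def triage(relevance: float, risk: float, rules: TriageRules = TriageRules()) -> str:
    """Route an item using explicit thresholds instead of an opaque decision."""
    if not (0.0 <= relevance <= 1.0 and 0.0 <= risk <= 1.0):
        raise ValueError("relevance and risk must be between 0 and 1")
    if relevance < rules.min_relevance:
        return "discard"
    if risk >= rules.review_risk:
        return "human_review"
    return "monitor"


print(triage(relevance=0.9, risk=0.8))  # human_review
print(triage(relevance=0.9, risk=0.2))  # monitor
print(triage(relevance=0.1, risk=0.9))  # discard
```

Keeping the thresholds in one visible place makes it easier to audit why an item was escalated and to recalibrate as the regulatory context changes.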

AI should be an accelerator of analysis, not a substitute for strategic judgment. When well-structured, reliable AI amplifies the ability to monitor the political-regulatory environment, anticipate changes, and protect or expand the organization's operating space.

6. Hyper-specialization as a model of trust

Sigalei, for example, applies the concept of hyper-specialization: AI agents are designed to perform very specific tasks within the government relations and political-regulatory workflow.

Instead of a single robot for “everything”, several specialized agents act in distinct stages, calibrated to the organization's context. This allows each stage to operate with greater precision, avoiding noise and increasing the quality of insights that support strategic decision-making.

At Sigalei, we understand that reliability in artificial intelligence is only possible with hyper-specialization. Therefore, we structure our AI agents to perform specific micro-activities in government relations workflows.

Each task, from identifying strategic themes to generating summaries and alerts, is designed with precision and calibrated to the appropriate context. This care ensures not only greater reliability but also more agility and intelligence in the decision-making process.

Do you want to apply AI safely in your government relations process?

Talk to our team! Let's discuss how to transform your operation with customized, reliable, and precise AI solutions.